AI Graphic Generator for Motion Design: What Studios Actually Use

I talk to a lot of other studio directors about their toolsets, and there's a real gap between what the internet says studios are using and what's actually running in production pipelines. The tools that get coverage are often the newest or the most visually impressive in a demo. The tools studios actually depend on are sometimes neither.

The AI graphic generators studios actually use

The tools that appear in real professional motion design pipelines tend to be the ones that integrate well with existing software rather than requiring a complete workflow switch. Photoshop's Generative Fill, Illustrator's text-to-vector features, and other Firefly-powered tools get more daily use in working studios than standalone AI image apps that require a separate browser tab.

Midjourney remains the dominant tool for style frame and concept graphic generation at the initial concepting stage. The quality ceiling is high, experienced designers understand how it responds to prompts, and the community of professional users means there's extensive shared knowledge about prompting specifically for motion design contexts.

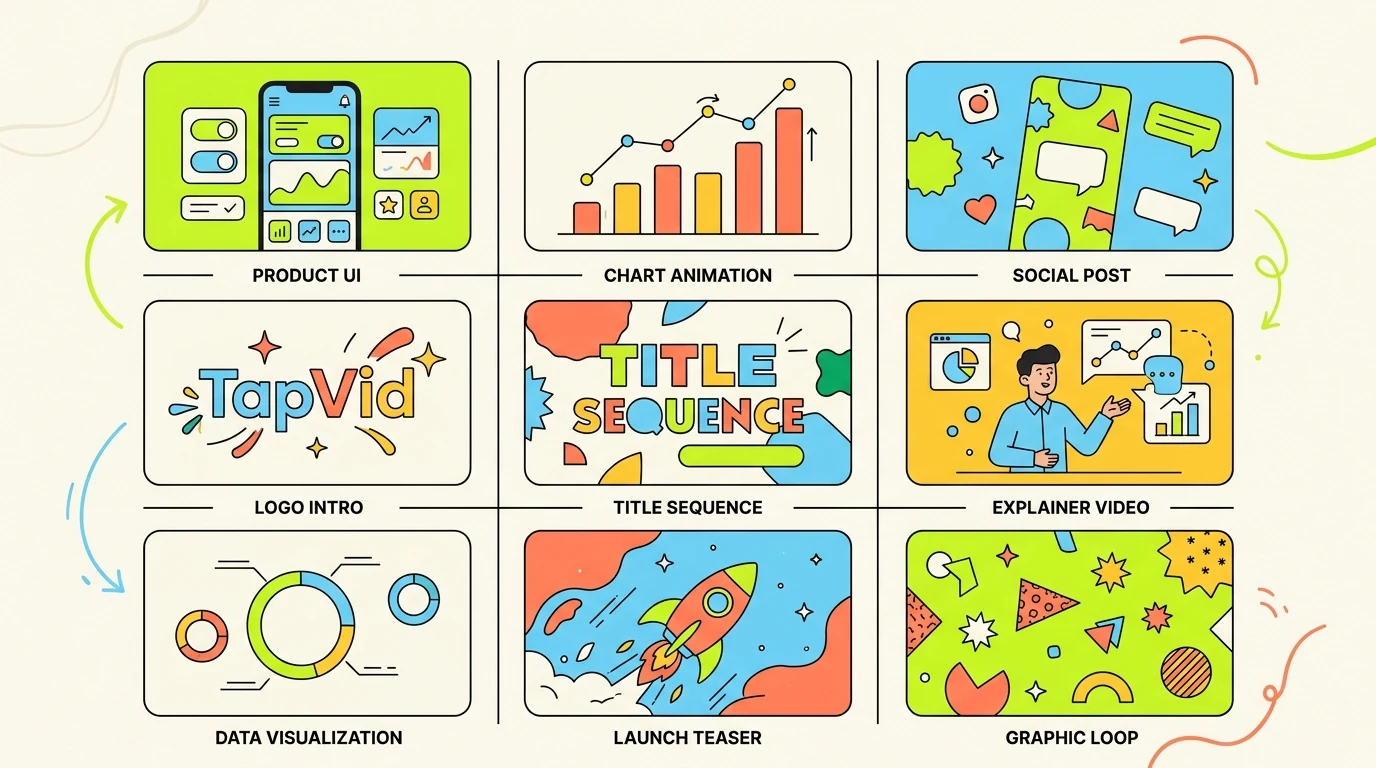

Use cases by production phase

- Concepting: Midjourney for style frames and visual direction. Output quality is high, and it is well suited to aesthetic exploration.

- Asset generation: Adobe Firefly for production assets because of commercial licensing clarity — this matters for client work.

- Background environments: Stable Diffusion with ControlNet for precise compositional control over generated environments.

- Texture and surface work: Generative Fill in Photoshop is now the standard tool for texture extension and surface variation.

- Vector and icon work: Illustrator's AI features for generating and adapting icon sets and graphic elements at scale.

- Motion reference: Runway for converting static graphic concepts into rough motion references before full production.

Why commercial licensing clarity matters more than you might think

For client work, 'can we legally use this in a commercial deliverable?' is the first question, not an afterthought. Several AI graphic generators produce beautiful output but have licensing terms that are ambiguous for commercial use or that require attribution in ways that don't work for client deliverables.

Adobe Firefly's commercial licensing clarity is the main reason it's become the default AI graphics tool in agency environments, even when designers prefer the aesthetic output of other tools. Legal and procurement teams at large clients are increasingly asking about AI tool provenance. Having a clear answer matters.

What studios don't use and why

I hear a lot of interest in DALL-E and GPT image generation from people outside the industry, but these tools get very limited use in professional motion design production. The prompt interface isn't optimized for the kind of iterative, systematic graphic generation that a production pipeline requires, and the output lacks the aesthetic precision that working designers need.

Most single-purpose AI graphic apps that launched in 2023 and 2024 haven't maintained their early position in studio workflows. The tools that lasted are the ones that solved integration problems: they fit into existing software, they respect commercial use requirements, and they got fast enough to use in a real production context.